Advertising Intelligence: What It Is and How Growth Teams Use It

A practical 2026 guide to advertising intelligence, including data sources, competitor ad workflows, use cases, quality checks, and how growth teams turn signals into decisions.

Advertising intelligence turns competitor ad evidence into creative briefs, channel decisions, landing page tests, and monitoring workflows.

By the AdMapix Research Desk - Updated April 16, 2026

Advertising intelligence is the practice of collecting, organizing, and interpreting ad market signals so a team can make better campaign decisions. It includes competitor ads, creative patterns, landing pages, channel mix, keyword intent, app store signals, spend movement, messaging changes, and the gap between what a competitor promises and what their funnel actually supports.

That definition matters because "ad intelligence" is often used loosely. Some teams mean a spy tool. Some mean advertising analysis. Some mean ad analytics inside their own accounts. Some mean digital advertising intelligence or digital ad intelligence across search, social, display, video, mobile, and app ecosystems. A useful advertising intelligence workflow connects all of those signals, but it does not confuse them.

This guide explains what advertising intelligence is, what data sources matter, how growth teams use it, and how to turn competitor ad intelligence into actions without copying blindly. If you need platform-specific guides, start with our Google Ads Transparency Center guide, Facebook Ads Library guide, and TikTok Creative Center tutorial. If you need ready research outputs, use AdMapix reports.

What Advertising Intelligence Means

Advertising intelligence is the decision layer between raw ad data and campaign action.

Raw data says:

| Raw signal | What it shows |
|---|---|
| A competitor launched 20 new ads | Creative volume changed. |
| The same hook keeps running | The advertiser may have found a useful message. |
| Landing pages changed | The offer, positioning, or audience may have shifted. |
| Search ad copy repeats a phrase | The phrase may match high-intent demand. |
| App store screenshots changed | The team may be improving conversion, not just buying traffic. |
| A creative appears across multiple markets | The concept may travel better than local variants. |

Advertising intelligence asks the next question: what should we do with that evidence?

| Intelligence question | Possible decision |
|---|---|
| Which hooks are competitors repeating? | Brief a different angle or test a stronger version. |
| Which offers are getting more visible? | Review pricing, bundles, lead magnets, or trial flow. |
| Which channels are competitors prioritizing? | Decide whether to test, avoid, or monitor that channel. |
| Which claims create trust risk? | Build safer proof, screenshots, or disclaimers. |
| Which landing pages match ads well? | Improve message match before increasing spend. |

This is why good ad intelligence is not only a database. It is a workflow for turning market evidence into better briefs, better tests, and better monitoring.

Advertising Intelligence Vs Ad Analytics

Advertising intelligence and ad analytics overlap, but they answer different questions.

| Area | Advertising intelligence | Ad analytics |
|---|---|---|
| Main question | What is happening in the market and competitor landscape? | What happened inside our own campaigns? |
| Data source | Public ads, ad libraries, SERPs, landing pages, stores, creative libraries, market signals | First-party platform data, attribution, CRM, analytics events |
| Best use | Competitor research, creative strategy, positioning, channel discovery, market monitoring | Optimization, reporting, forecasting, budget control, performance diagnosis |
| Typical output | Swipe file, pattern map, competitor teardown, creative brief, monitoring alert | Dashboard, KPI report, attribution model, cohort analysis |
| Risk | Copying competitors without understanding context | Optimizing only past performance and missing market shifts |

Ad analytics tells you what your campaigns did. Advertising intelligence helps you understand what the market is doing and what you should test next.

Strong teams combine both:

- Use advertising intelligence to define hypotheses.

- Use ad analytics to validate whether the hypotheses work for your account.

- Feed the result back into the next competitor read.

For example, competitor research may show that many SaaS competitors are testing "migration from legacy tools" messaging. Your ad analytics may show that your own migration landing page has poor conversion. The correct response is not "copy the competitor." It is "test whether the message works after improving proof, offer, and landing page match."

Core Advertising Intelligence Data Sources

No single source gives a complete view. Digital advertising intelligence usually combines multiple source types, and the value of ad intelligence data depends on how clearly the team can connect those sources to decisions.

| Source type | What it can reveal | What it cannot fully prove |
|---|---|---|
| Public ad libraries | Active creatives, messaging, formats, page-level activity | Exact spend, ROI, conversion quality |
| Search results and transparency surfaces | Search ad copy, keyword intent, landing page patterns | Full bidding strategy or budget |
| Creative centers | Popular formats, category examples, short-form pacing | Whether a specific competitor is profitable |
| App stores and product pages | Conversion proof, screenshots, ratings, positioning | Exact paid traffic quality |
| Landing pages | Offer, funnel structure, proof, pricing, CTA | Back-end conversion rate |
| Social channels and creators | Audience reaction, community proof, content-market fit | Attribution without tracking |
| First-party ad analytics | Your performance, cost, events, cohorts | Competitor strategy |

Useful public sources include Google Ads Transparency Center, Meta's Ad Library help resource, TikTok Creative Center, and Apple Ads for App Store advertising context. These sources are helpful because they show real market artifacts, but they still require interpretation.

The key limitation: seeing an ad does not prove that the ad is profitable. Longevity, repetition, cross-channel usage, landing-page alignment, and store changes are stronger signals than a single screenshot.
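To make those stronger signals explicit, a team can record them per ad and list which ones are present. The sketch below assumes this approach. `AdObservation`, `evidence_signals`, and every threshold in it are hypothetical and illustrative, not taken from any tool. A longer list still does not prove profitability. It only ranks which ads deserve a closer look.

```python
from dataclasses import dataclass


@dataclass
class AdObservation:
    """One tracked competitor ad. Field names are hypothetical, not from any tool."""
    ad_id: str
    days_active: int            # longevity: days between first and last sighting
    weeks_seen: int             # repetition: weekly reviews in which the ad appeared
    channels: set[str]          # cross-channel usage, e.g. {"meta", "tiktok"}
    landing_page_matches: bool  # landing page repeats the ad's promise
    store_page_updated: bool    # store screenshots changed to match the ad angle


def evidence_signals(obs: AdObservation, min_days: int = 30, min_weeks: int = 3) -> list[str]:
    """Return which of the stronger signals are present (thresholds are illustrative)."""
    signals = []
    if obs.days_active >= min_days:
        signals.append("longevity")
    if obs.weeks_seen >= min_weeks:
        signals.append("repetition")
    if len(obs.channels) >= 2:
        signals.append("cross-channel")
    if obs.landing_page_matches:
        signals.append("landing-page alignment")
    if obs.store_page_updated:
        signals.append("store change")
    return signals


ad = AdObservation("ad-001", days_active=45, weeks_seen=2, channels={"meta", "tiktok"},
                   landing_page_matches=True, store_page_updated=False)
print(evidence_signals(ad))  # ['longevity', 'cross-channel', 'landing-page alignment']
```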

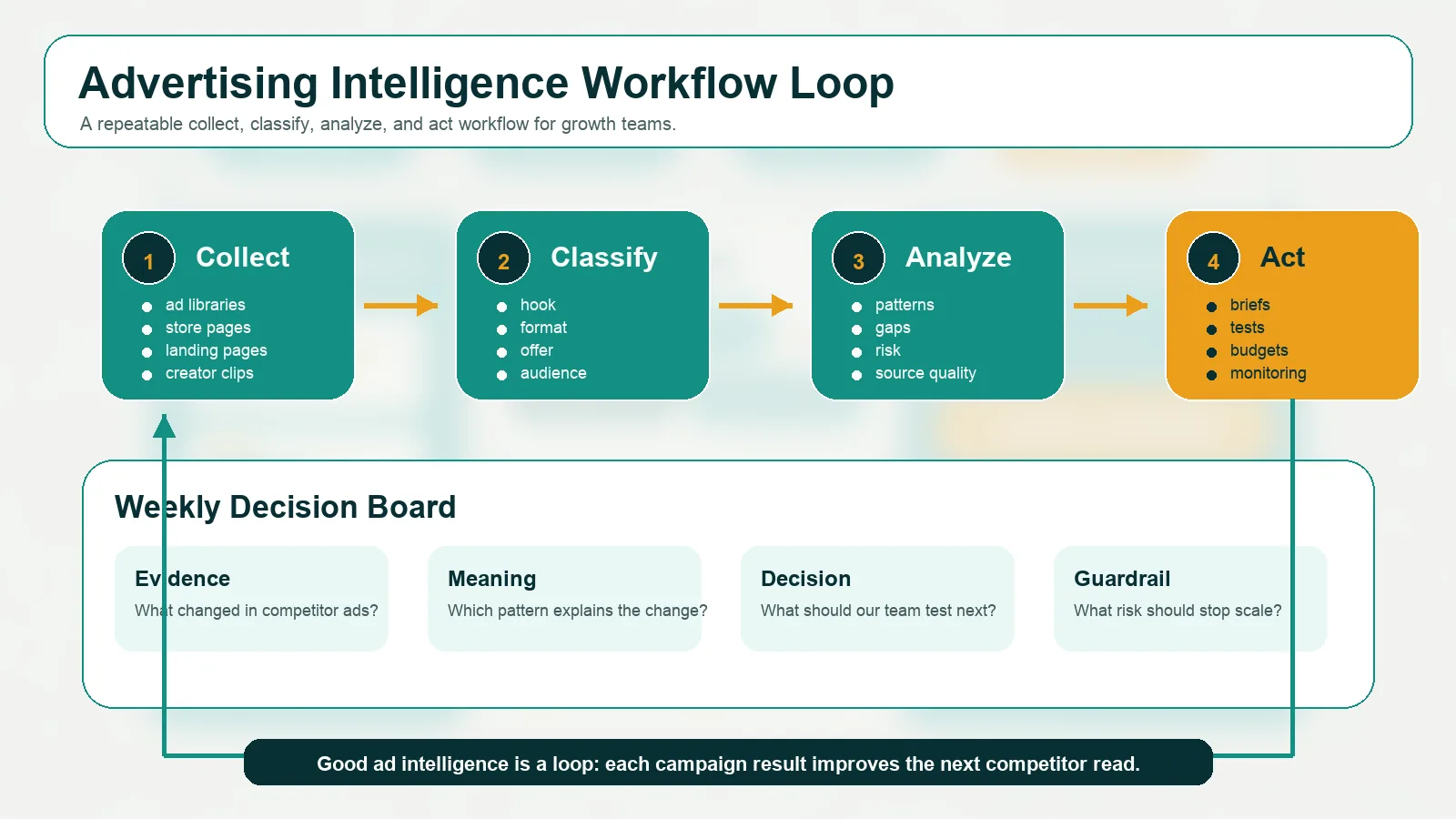

The Workflow: Collect, Classify, Analyze, Act

A useful ad intelligence workflow turns scattered ad examples into a weekly decision board.

1. Collect Evidence

Collect competitor evidence before interpreting it.

Minimum collection list:

| Evidence | What to capture |
|---|---|
| Ads | Screenshot, video, copy, CTA, format, start date when available |
| Landing pages | URL, headline, offer, proof, form, pricing, page structure |
| Store pages | Screenshots, app preview, title, subtitle, ratings, reviews |
| Search results | Keyword, ad copy, visible competitors, landing pages |
| Creator content | Hook, creator type, audience reaction, product proof |
| Change history | What changed this week compared with last week |

Do not over-collect. A small, clean dataset with tags is better than a huge folder of screenshots nobody can use.
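The "change history" row can be kept small by diffing weekly snapshots instead of re-reading every screenshot. The sketch below assumes each weekly capture is stored as a dictionary keyed by an ad ID your team assigns, with the captured fields (copy, CTA, and so on) as values. `weekly_changes` and the field names are hypothetical.

```python
def weekly_changes(last_week: dict[str, dict], this_week: dict[str, dict]) -> dict[str, list[str]]:
    """Compare two snapshots keyed by ad ID and report new, stopped, and changed ads."""
    new = sorted(set(this_week) - set(last_week))
    stopped = sorted(set(last_week) - set(this_week))
    # An ad counts as changed if any captured field (copy, CTA, ...) differs.
    changed = sorted(
        ad_id for ad_id in set(this_week) & set(last_week)
        if this_week[ad_id] != last_week[ad_id]
    )
    return {"new": new, "stopped": stopped, "changed": changed}


last = {"a1": {"copy": "Switch in a day", "cta": "Start trial"},
        "a2": {"copy": "Save 20%", "cta": "Shop now"}}
this = {"a1": {"copy": "Switch in a day", "cta": "Book a demo"},
        "a3": {"copy": "New bundle", "cta": "Shop now"}}
print(weekly_changes(last, this))  # {'new': ['a3'], 'stopped': ['a2'], 'changed': ['a1']}
```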

2. Classify The Signals

Classification turns examples into analysis-ready data.

Use tags like:

| Tag group | Examples |
|---|---|
| Channel | Search, paid social, TikTok, Meta, YouTube, display, app store, creator |
| Format | UGC, demo, comparison, product tour, playable, carousel, static, testimonial |
| Hook | Pain point, challenge, outcome, savings, speed, trust, fear, curiosity |
| Offer | Trial, discount, free tool, bundle, demo, report, pre-registration |
| Proof | Customer quote, review, product screenshot, benchmark, creator reaction, rating |
| Funnel stage | Awareness, consideration, conversion, retention, win-back |
| Risk | Overclaim, weak proof, policy risk, mismatch, generic claim |

For mobile ad intelligence, add app-specific tags such as store page match, playable, rewarded video, app event, pre-registration, in-app purchase, retention hook, and review risk. For B2B ad intelligence, add persona, company size, pain point, integration, migration, and demo intent.
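The tag table can also serve as a controlled vocabulary, so free-text tags like "free trial" versus "trial" do not fragment the dataset. The sketch below assumes this setup. The `TAXONOMY` values come from the tag table. Which groups are required is an illustrative choice, and `validate_tags` is a hypothetical helper. Mobile or B2B groups would be added as extra keys.

```python
# Allowed values per tag group, copied from the tag table; extend per team.
TAXONOMY: dict[str, set[str]] = {
    "channel": {"search", "paid social", "tiktok", "meta", "youtube", "display", "app store", "creator"},
    "format": {"ugc", "demo", "comparison", "product tour", "playable", "carousel", "static", "testimonial"},
    "hook": {"pain point", "challenge", "outcome", "savings", "speed", "trust", "fear", "curiosity"},
    "offer": {"trial", "discount", "free tool", "bundle", "demo", "report", "pre-registration"},
    "proof": {"customer quote", "review", "product screenshot", "benchmark", "creator reaction", "rating"},
    "funnel stage": {"awareness", "consideration", "conversion", "retention", "win-back"},
    "risk": {"overclaim", "weak proof", "policy risk", "mismatch", "generic claim"},
}


def validate_tags(tags: dict[str, list[str]],
                  required: tuple[str, ...] = ("channel", "format", "hook")) -> list[str]:
    """Return a list of problems; an empty list means the tags are usable."""
    problems = []
    for group in required:
        if not tags.get(group):
            problems.append(f"missing required group: {group}")
    for group, values in tags.items():
        allowed = TAXONOMY.get(group)
        if allowed is None:
            problems.append(f"unknown group: {group}")
            continue
        for value in values:
            if value.lower() not in allowed:  # case-insensitive match against the vocabulary
                problems.append(f"unknown {group} value: {value}")
    return problems


print(validate_tags({"channel": ["Meta"], "format": ["UGC"], "hook": ["speed"], "offer": ["free trial"]}))
# ['unknown offer value: free trial']
```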

3. Analyze Patterns

Advertising analysis should not stop at "this ad looks good." Ask structured questions:

| Question | Why it matters |
|---|---|
| Which hooks repeat across different competitors? | Repetition suggests category demand or creative convention. |
| Which hooks are missing? | Gaps can become differentiated tests. |
| Which landing pages support the ad promise? | Message match affects conversion quality. |
| Which offers changed recently? | Offer changes may reveal pressure, seasonality, or repositioning. |
| Which formats are overused? | Overuse can create fatigue or an opportunity for contrast. |
| Which claims feel risky? | Risky claims may win attention but hurt trust or compliance. |

The goal is to explain movement. If a competitor launches 50 ads, the action is not automatically to launch 50 ads. The action is to understand whether they are testing new positioning, scaling a known winner, entering a new audience, refreshing fatigue, or supporting a new product release.
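The first two analysis questions (which hooks repeat across different competitors, and which are missing) reduce to counting distinct competitors per hook tag. The sketch below assumes ads were already tagged as `(competitor, hook)` pairs in step 2. `hook_coverage` is a hypothetical helper. It counts competitors rather than ads, so one advertiser launching 50 variants does not look like a category trend.

```python
from collections import defaultdict


def hook_coverage(ads: list[tuple[str, str]], all_hooks: set[str]) -> tuple[dict[str, int], set[str]]:
    """ads: (competitor, hook) pairs. Returns competitors-per-hook counts and unused hooks."""
    competitors_by_hook: dict[str, set[str]] = defaultdict(set)
    for competitor, hook in ads:
        competitors_by_hook[hook].add(competitor)
    # Distinct competitors, not ad volume: repeated ads from one advertiser count once.
    counts = {hook: len(names) for hook, names in competitors_by_hook.items()}
    gaps = all_hooks - set(counts)  # hooks nobody in the sample is using
    return counts, gaps


ads = [("A", "speed"), ("A", "speed"), ("B", "speed"), ("C", "savings"), ("B", "trust")]
counts, gaps = hook_coverage(ads, {"speed", "savings", "trust", "outcome", "curiosity"})
print(counts)  # {'speed': 2, 'savings': 1, 'trust': 1}
```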

4. Act On The Decision

Every ad intelligence review should produce one of four outputs:

| Output | Example |
|---|---|
| Creative brief | "Test proof-led comparison ads against generic feature ads." |
| Channel decision | "Monitor TikTok for two more weeks before allocating test budget." |
| Landing page test | "Build a page that matches the competitor's migration angle, but with stronger proof." |
| Monitoring rule | "Alert when three direct competitors repeat the same offer or claim." |

If a review produces only screenshots, it is not intelligence yet.
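A monitoring rule such as "alert when three direct competitors repeat the same offer or claim" can be written as a windowed count. The sketch below assumes sightings are logged as `(competitor, offer, date_seen)` tuples. The 14-day window is an illustrative default, and the threshold of three matches the example rule. `offer_alerts` is a hypothetical helper.

```python
from datetime import date, timedelta


def offer_alerts(
    sightings: list[tuple[str, str, date]],
    direct_competitors: set[str],
    today: date,
    window_days: int = 14,
    min_competitors: int = 3,
) -> dict[str, set[str]]:
    """Return offers/claims seen from at least `min_competitors` direct competitors in the window."""
    cutoff = today - timedelta(days=window_days)
    seen: dict[str, set[str]] = {}
    for competitor, offer, seen_on in sightings:
        # Ignore indirect competitors and sightings outside the monitoring window.
        if competitor in direct_competitors and cutoff <= seen_on <= today:
            seen.setdefault(offer, set()).add(competitor)
    return {offer: names for offer, names in seen.items() if len(names) >= min_competitors}


sightings = [
    ("A", "free migration", date(2026, 4, 10)),
    ("B", "free migration", date(2026, 4, 12)),
    ("C", "free migration", date(2026, 4, 15)),
    ("D", "free migration", date(2026, 4, 14)),  # D is not a direct competitor
    ("A", "20% off", date(2026, 3, 1)),          # outside the 14-day window
    ("B", "20% off", date(2026, 4, 14)),
    ("C", "20% off", date(2026, 4, 14)),
]
print(offer_alerts(sightings, {"A", "B", "C"}, today=date(2026, 4, 16)))
```

Only "free migration" triggers an alert here. "20% off" has just two direct competitors inside the window.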

What Growth Teams Can Learn From Ad Intelligence

Advertising intelligence helps teams answer practical questions.

| Team question | Ad intelligence answer |
|---|---|
| What should we test next? | Look for repeated competitor hooks, gaps, and landing-page mismatches. |
| Which channels deserve attention? | Compare competitor activity across search, social, app, video, creator, and display surfaces. |
| What does the market think is valuable? | Review the offers, claims, benefits, and proof competitors repeat. |
| How should we position against alternatives? | Find overused language and build a more specific promise. |
| Are we missing a category trend? | Monitor changes in creative format, offer, persona, and funnel stage. |
| Is a competitor increasing pressure? | Look for creative volume, channel expansion, landing page updates, and offer repetition. |

For example, an ecommerce team might discover that competitors are shifting from generic discounts to product-bundle messaging. A SaaS team might see a wave of migration ads. A mobile app team might notice that rivals are updating store screenshots to match paid ads. A game studio might see that competitors have moved from cinematic trailers to direct gameplay proof. Each finding should become a testable brief.

Use Cases By Team Type

SaaS And B2B Teams

B2B teams use advertising intelligence to track positioning, persona targeting, proof, and offers.

Useful questions:

| Question | What to inspect |
|---|---|
| Which persona is being targeted? | Job titles, pain points, use-case language |
| Which proof is emphasized? | Customer logos, benchmarks, testimonials, integrations |
| Which funnel action is used? | Demo, trial, webinar, report, calculator |
| Which competitors changed messaging? | Landing page updates, ad copy shifts, offer changes |

The best output is often a sharper landing page or comparison angle, not a copied ad.

Ecommerce Teams

Ecommerce teams use ad intelligence to understand product angles, offer patterns, bundles, urgency, creative formats, and landing-page structure.

Useful tags:

| Tag | Examples |
|---|---|
| Product angle | Before/after, problem-solution, gift, routine, social proof |
| Offer | Discount, bundle, free shipping, subscription, limited drop |
| Proof | Review count, creator demo, product claim, press mention |
| Funnel | PDP, advertorial, quiz, collection page, checkout offer |

The danger is copying a visible creative without knowing margin, inventory, or repeat-purchase economics. Treat competitor ads as hypotheses, not instructions.

Apps And Mobile Growth Teams

Mobile ad intelligence connects paid creatives, app store pages, ratings, keyword intent, and post-install quality.

Key checks:

| Check | Why it matters |
|---|---|
| Does the ad match the store page? | Store mismatch reduces conversion and trust. |
| Does the promise match first-session experience? | Misleading promises hurt retention. |
| Which screenshots changed? | Store teams may be improving conversion around a paid angle. |
| Which app categories repeat the same hook? | Shared hooks may reveal category demand. |

If you work in games, pair this page with our mobile game ads guide and video game marketing guide.

Agencies And Competitive Strategy Teams

Agencies use advertising intelligence to make client recommendations defensible.

Instead of a vague claim like "competitors are doing more TikTok," a strong report replaces each weak observation with specific evidence:

| Weak observation | Stronger intelligence |
|---|---|
| "Competitors run video ads." | "Three direct competitors shifted from static product proof to creator-led demos in the last 14 days." |
| "Their landing page is better." | "Their ad headline, hero section, and CTA all repeat the same migration promise." |
| "We should test discounts." | "Discounts are visible, but competitor ads with bundle proof have more durable repetition." |

This makes the recommendation easier to trust.

Tool And Data Quality Checklist

This article is not a ranking of ad intelligence tools; vendor comparison is a separate topic. Still, any ad intelligence software or platform should be judged by data quality and workflow fit.

Use this checklist:

| Requirement | Why it matters |
|---|---|
| Source coverage | The tool must cover the channels that matter to you: search, social, display, video, mobile, or app stores |
| Freshness | Stale ads mislead campaign planning |
| Creative detail | Teams need video, copy, CTA, format, landing URL, and metadata when available |
| Filtering | Market, language, platform, competitor, date, format, and category filters save time |
| Tagging | Without tags, research becomes a screenshot archive |
| Export and sharing | Briefs and reports need to move across marketing, creative, and leadership |
| Repeat monitoring | One-time research is useful, but weekly change detection is stronger |
| Context notes | The tool should support human interpretation, not only data collection |

AdMapix is built around this workflow: discover competitor ads, organize patterns, and turn findings into reports your team can act on. Start with reports, or review pricing if you need ongoing monitoring.

Common Mistakes

Mistake 1: Treating Visibility As Profitability

An ad being visible does not prove it is profitable. Look for stronger signals: repetition, longevity, landing-page alignment, cross-channel use, store updates, and whether the promise fits the product.

Mistake 2: Copying The Surface

Copying a competitor's color, format, or hook without understanding context creates weak work. The better move is to identify the mechanism behind the ad and build an original test.

Mistake 3: Ignoring Landing Pages

Ads and landing pages are one system. If competitor ad copy promises "faster migration," inspect whether the landing page proves speed. If your team tests the same angle with a generic page, the test is not fair.
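A rough first pass at message match is to check how many of an ad's key terms reappear on its landing page. The sketch below assumes plain-text ad copy and page text. It uses simple word overlap as a stand-in for proper message-match review, with no stemming, so "migrate" and "migration" do not match. The stopword list and both helpers are hypothetical. The missing terms are the useful part: they show which promise the page does not repeat.

```python
import re

STOPWORDS = {"a", "an", "and", "the", "to", "for", "of", "in", "your", "with", "on"}


def key_terms(text: str) -> set[str]:
    """Lowercase word tokens minus a tiny stopword list (no stemming)."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def message_match(ad_copy: str, page_text: str) -> tuple[float, set[str]]:
    """Return the share of ad terms found on the page and the ad terms the page never repeats."""
    ad_terms = key_terms(ad_copy)
    if not ad_terms:
        return 0.0, set()
    shared = ad_terms & key_terms(page_text)
    return len(shared) / len(ad_terms), ad_terms - shared


score, missing = message_match("Faster migration from legacy tools",
                               "Migrate from legacy tools in a day")
print(score, sorted(missing))  # 0.6 ['faster', 'migration']
```

In this example the page never says "faster", which is exactly the gap the paragraph above warns about.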

Mistake 4: Mixing Ad Analytics With Market Intelligence

Your internal dashboard can tell you what happened in your account. It cannot tell you what competitors are testing this week. Keep both views active.

Mistake 5: Failing To Write The Decision

Every review should end with a decision: test, monitor, pause, brief, rewrite, or ignore. If the output is only "interesting examples," the workflow is incomplete.
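One way to enforce this is to make the review record refuse to close without a decision. The sketch below is a hypothetical structure, not a prescribed tool. The six `Decision` values mirror the list above, and `Review` maps each finding to a decision.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    TEST = "test"
    MONITOR = "monitor"
    PAUSE = "pause"
    BRIEF = "brief"
    REWRITE = "rewrite"
    IGNORE = "ignore"


@dataclass
class Review:
    week: str
    examples: list[str] = field(default_factory=list)             # collected ad IDs or links
    decisions: dict[str, Decision] = field(default_factory=dict)  # finding -> decision

    def close(self) -> dict[str, Decision]:
        """Refuse to close a review that produced only interesting examples."""
        if not self.decisions:
            raise ValueError(f"review {self.week} ended without a decision")
        return self.decisions


review = Review("2026-W16", examples=["ad-001", "ad-002"])
review.decisions["competitor migration angle"] = Decision.TEST
print(review.close())
```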

FAQ

What is advertising intelligence?

Advertising intelligence is the practice of collecting and analyzing market ad signals such as competitor creatives, landing pages, channel activity, offers, and messaging so teams can make better campaign decisions.

What is the difference between advertising intelligence and ad analytics?

Ad analytics focuses on your own campaign performance. Advertising intelligence focuses on the broader market and competitor landscape. Strong teams use both: intelligence to generate hypotheses and analytics to validate them.

What data sources do ad intelligence tools use?

They may use public ad libraries, search results, creative centers, landing pages, app stores, social content, first-party data, and human tagging. Source coverage varies by tool and market.

Is it legal to research competitor ads?

Teams can generally review public ad examples and public landing pages, but they should not misuse private data, violate platform terms, or copy protected creative. This article is not legal advice; review relevant policies and laws for your market.

How often should a team review advertising intelligence?

For active categories, weekly reviews are useful. For slower markets, monthly reviews may be enough. The cadence should match how often competitors change creative, offers, and channels.

What should a good ad intelligence workflow produce?

It should produce creative briefs, channel decisions, landing-page tests, monitoring rules, and clear hypotheses for your analytics team to validate.

Bottom Line

Advertising intelligence is not a screenshot collection. It is a repeatable way to understand what competitors are saying, where they are saying it, what evidence supports it, and what your team should test next.

Use competitor evidence to sharpen strategy, not to copy blindly. The winning workflow is collect, classify, analyze, act, and then use your own ad analytics to decide what deserves scale.

If your next step is tactical, use the search ads intelligence guide for Google PPC research, the best ad intelligence tools comparison for vendor selection, and ad tracking for competitive research for weekly monitoring.

