30 Days of AdMapix: What We Learned About Ad Intelligence in 2026

A 30-day recap of ad intelligence workflows, ad spy tools, transparency libraries, app ads, game ads, and competitor research lessons for 2026.

The 30-day series was designed as a connected ad intelligence map, not a pile of isolated tool reviews.

Ad intelligence in 2026 is not just "find a competitor ad and copy it." That workflow is too shallow, too risky, and usually wrong.

After building a 30-day content cluster around ad spy tools, Facebook Ads Library, Google Ads Transparency Center, app ads, game ads, competitor workflows, and saved reports, one pattern became clear: the teams that benefit from ad intelligence are not the teams with the biggest screenshot folder. They are the teams that can turn competitor research insights into repeatable decisions.

This recap summarizes what we learned across the series:

| Cluster | What it taught us |
|---|---|
| Tool comparison | Buyers want alternatives, but they need workflow fit more than feature lists |
| Competitor ad research | Public data is useful only when saved with context |
| Meta Ad Library | Strong for discovery, limited for performance proof |
| Google Ads | Auction data and transparency data answer different questions |
| App and game ads | Vertical context matters more than generic swipe files |
| Reports | Saved views turn research from memory into an operating system |
| SEO | Each page should own a distinct search intent, not cannibalize the blog |

If you are new to the cluster, start with best ad spy tools in 2026, then read how to spy on competitors' ads. If you are comparing legacy PPC tools, see SpyFu alternatives.

What This 30-Day Series Covered

The series had one strategic goal: make AdMapix visible across the full journey from keyword research to competitor ad monitoring.

That meant writing for different search intents:

| Intent | Example topic | Why it matters |
|---|---|---|
| Tool comparison | SpyFu alternatives, best ad spy tools | Captures buyers comparing platforms |
| How-to workflow | How to spy on competitors' ads | Captures users who know the problem but not the process |
| Platform guide | Facebook Ads Library complete guide | Captures long-tail platform searches |
| Asset preservation | Download videos from Meta Ads Library | Captures practical pain points after discovery |
| Public transparency | Google Ads Transparency Center | Captures Google ad research queries |
| Auction context | Google Ads Auction Insights | Separates private auction data from public ad examples |
| Vertical research | Mobile game ads, app ads, in-game advertising | Captures marketers who need category-specific patterns |
| Reporting | Reports pages and saved competitor views | Turns search traffic into product usage |

The big SEO lesson: a blog should not act like a random content feed. Each article needs a clear job in the cluster. The Facebook Ads Library complete guide should own broad long-tail discovery. The Facebook Ads Library update frequency guide should own freshness and delay questions. The Chinese-language Facebook Ad Library (广告资料库) guide should serve a separate language market. The Google Ads Auction Insights comparison should not compete with the Google Ads Transparency Center guide, because their search intent is different.

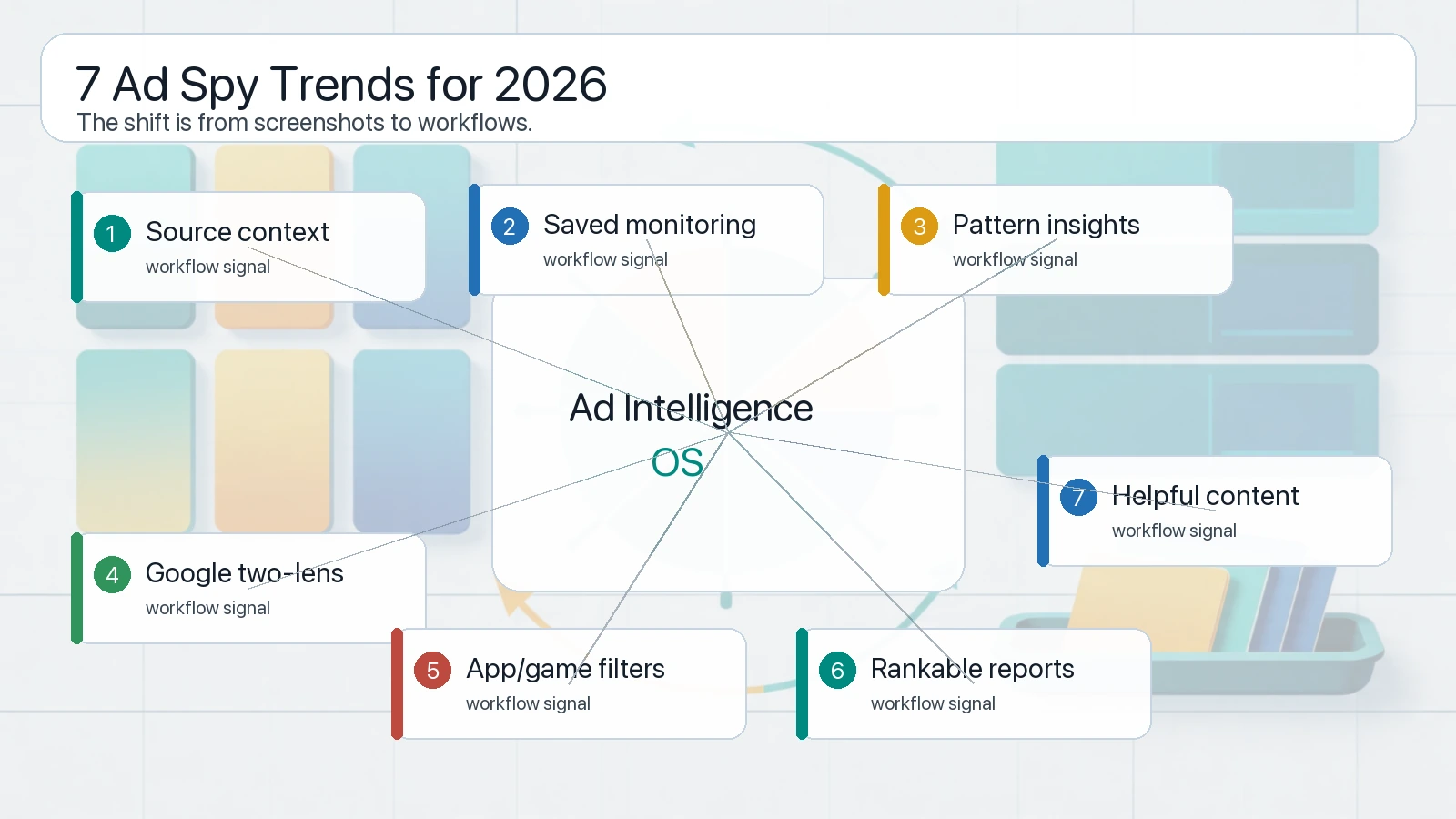

7 Ad Intelligence Trends We Saw Repeatedly

The strongest ad spy trends in 2026 are workflow trends: source context, saved reports, vertical filters, and testable briefs.

1. Public transparency data is useful but incomplete

Meta's Ad Library API documents fields such as creative content, Page name, Page ID, delivery dates, and where ads appeared. It also indicates that additional fields for spend, impressions, targeting, and demographics are available only for certain ad types or regions.

Google's Ads Transparency Center launch post describes a public hub that helps users see ads from verified advertisers, including region, format, and last shown date. Google's Safety Center also frames the center as part of advertiser transparency.

These sources are valuable. They are not the same as performance data.

The practical lesson: public libraries help you see what is visible, not what is profitable.

2. Ad intelligence is shifting from search to monitoring

Old-school ad spying was search-heavy: enter a keyword, inspect a few ads, save screenshots. That still works for quick inspiration, but it breaks when teams need a repeatable process.

In 2026, the better workflow is monitoring-heavy:

| Old workflow | Better workflow |
|---|---|
| Search once | Save competitor sets |
| Screenshot manually | Archive examples with metadata |
| Copy the ad | Extract the testable pattern |
| Share in chat | Save in a report |
| Check when remembered | Review on a weekly cadence |

This is why AdMapix reports matter. The report is not just an output page. It is the memory layer for competitor research.

3. The best insights are pattern-based, not ad-based

A single ad can be misleading. It may be new, paused, unprofitable, experimental, localized, or shown only in a narrow segment.

A pattern is stronger:

| Weak observation | Stronger pattern |
|---|---|
| Competitor used a discount | Three competitors repeat trial-first pricing |
| One video uses a hook | Multiple ads open with the same pain point |
| A brand changed color | Several creatives now emphasize social proof |
| A headline looks aggressive | The whole category is shifting to comparison claims |

Good ad intelligence platforms should help teams move from "I saw an ad" to "we found a repeated market pattern worth testing."
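One way to make the jump from a single ad to a repeated pattern concrete is to count how many distinct advertisers show the same tagged pattern. The sketch below is illustrative only: the advertiser names, the pattern tags, and the threshold of three advertisers are assumptions made for the example, not an AdMapix feature or data format.

```python
from collections import defaultdict

# Hypothetical observations: (advertiser, pattern_tag) pairs tagged by a researcher.
observations = [
    ("BrandA", "trial-first pricing"),
    ("BrandB", "trial-first pricing"),
    ("BrandC", "trial-first pricing"),
    ("BrandA", "discount"),
    ("BrandB", "pain-point hook"),
    ("BrandB", "pain-point hook"),  # same advertiser twice still counts once
]


def repeated_patterns(observations, min_advertisers=3):
    """Return patterns seen across at least `min_advertisers` distinct advertisers."""
    advertisers_by_pattern = defaultdict(set)
    for advertiser, pattern in observations:
        advertisers_by_pattern[pattern].add(advertiser)
    return {
        pattern: sorted(advertisers)
        for pattern, advertisers in advertisers_by_pattern.items()
        if len(advertisers) >= min_advertisers
    }


print(repeated_patterns(observations))
# {'trial-first pricing': ['BrandA', 'BrandB', 'BrandC']}
```

Counting distinct advertisers rather than raw ad counts keeps one prolific competitor from looking like a category-wide shift.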

4. Google competitor research needs two lenses

Google Ads research is easy to misunderstand.

Google's Auction Insights help page explains that Auction Insights compares your performance with other advertisers participating in the same auctions as you. That makes it excellent for auction pressure, but it does not show public creative examples.

Google Ads Transparency Center can show public ads from verified advertisers, but it does not show your private impression share, overlap rate, outranking share, or campaign economics.

The 2026 lesson: Google competitor research needs both auction context and public creative context. Treating either one as the full truth creates bad strategy.
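A minimal sketch of keeping the two lenses side by side, assuming you have exported Auction Insights rows from your own account and noted public examples from the Transparency Center by hand. The field names, the domains, and the idea of joining on advertiser domain are all assumptions for illustration; neither Google product provides this structure directly.

```python
# Hypothetical rows exported from your own Auction Insights report.
auction_rows = [
    {"domain": "rival-a.example", "overlap_rate": 0.62, "outranking_share": 0.31},
    {"domain": "rival-b.example", "overlap_rate": 0.18, "outranking_share": 0.55},
]

# Hypothetical public examples noted manually from the Transparency Center.
public_ads = [
    {"domain": "rival-a.example", "format": "video", "last_shown": "2026-01-12"},
    {"domain": "rival-a.example", "format": "text", "last_shown": "2026-01-15"},
    {"domain": "rival-c.example", "format": "image", "last_shown": "2026-01-10"},
]


def combine_lenses(auction_rows, public_ads):
    """Group auction context and public creatives under one key per competitor."""
    combined = {}
    for row in auction_rows:
        combined[row["domain"]] = {"auction": row, "creatives": []}
    for ad in public_ads:
        # Competitors with public ads but no auction overlap get auction=None.
        entry = combined.setdefault(ad["domain"], {"auction": None, "creatives": []})
        entry["creatives"].append(ad)
    return combined


for domain, lenses in combine_lenses(auction_rows, public_ads).items():
    has_auction = lenses["auction"] is not None
    print(domain, "auction data:", has_auction, "public creatives:", len(lenses["creatives"]))
```

A competitor that appears under only one lens is itself a finding: it tells you which half of the picture is still missing before you draw a conclusion.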

5. App and game marketers need vertical filters

Generic ad spy workflows often miss what app and game teams actually care about:

| App/game question | Why generic tools struggle |
|---|---|
| Is this a playable ad, video ad, or store listing angle? | Format context is often flattened |
| Which countries or stores are relevant? | Market context is often missing |
| Is the creative tied to a feature, event, or monetization loop? | Vertical semantics are not labeled |
| Does the ad imply install intent or re-engagement intent? | Funnel stage is rarely explicit |

That is why app-focused and game-focused pages are not just SEO side quests. They define a more specific product expectation for the ad intelligence platform.

6. SEO pages can support ranking pages and product pages at the same time

The goal for /reports and /r/* pages is sound: reports should participate in Google ranking when they contain useful, indexable, non-duplicate value.

The blog's role is to create intent coverage. The reports' role is to show concrete research artifacts. When the two link together cleanly, they support each other:

| Asset | SEO job |
|---|---|
| Blog guide | Explain the method and own informational search intent |
| Report page | Show specific examples, datasets, or curated research |
| Category page | Organize clusters |
| Product page | Convert users with a recurring need |

This is why we avoid making every query point to the homepage. Brand terms can be homepage-led, but non-brand research intent should have its own rankable URLs.

7. Helpful content beats generic tool lists

The ad intelligence SERP is crowded with listicles. Most are interchangeable.

The pages with a better chance in 2026 have:

| Quality signal | What it looks like |
|---|---|
| Search intent match | The opening directly answers the query |
| Source awareness | Official docs are linked and limits are explained |
| Original framing | The article adds a decision framework, not just definitions |
| Workflow detail | Readers can repeat the process |
| Internal linking | Related intent pages are connected |
| Visual support | Images clarify the system rather than decorate the page |
| Product fit | CTA follows naturally from the workflow |

This is the bar future articles should keep.

What Tools Won

The tools that won in our 30-day review were not simply the tools with the biggest databases.

They had five traits:

| Winning trait | Why it matters |
|---|---|
| Source transparency | Users need to know where the ad came from |
| Saved monitoring | Teams need recurring research, not one-time screenshots |
| Creative context | Copy, format, landing angle, and platform matter together |
| Vertical filtering | App, game, ecommerce, B2B, and local ads require different lenses |
| Exportable reports | Insights need to move into briefs, meetings, and tests |

This is also the product lesson for AdMapix: the defensible value is not just collection. It is organization, interpretation, and repeatability.

What Tools Lost

The tools that lost were the ones that encouraged shallow conclusions:

| Losing pattern | Why it fails |
|---|---|
| Screenshot dumps | No source, date, filter, or context |
| Scrape-only dashboards | Lots of examples, little decision support |
| Performance cosplay | Implies winning ads without evidence |
| Generic competitor lists | No market, product, or audience context |
| No saved workflow | Research disappears after the browser tab closes |

The biggest risk is not missing an ad. The biggest risk is building a campaign from a false insight.

A Practical Ad Intelligence Workflow for 2026

Use this operating system:

| Step | Output |
|---|---|
| 1. Define the research question | Competitor, market, format, offer, or channel |
| 2. Choose the source | Meta Library, Google Transparency, Auction Insights, app stores, ad reports |
| 3. Capture source context | URL, date, country, platform, advertiser, format |
| 4. Group repeated patterns | Hook, offer, audience, proof, CTA, visual style |
| 5. Write a test brief | Original hypothesis, not direct copy |
| 6. Save the report | Make the research reusable |
| 7. Review cadence | Weekly for active markets, monthly for slower categories |
| 8. Compare with your own data | Only your own tests prove performance |

This workflow is simple enough for a small team and rigorous enough for a growth team.
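Steps 3 and 7 are the easiest to skip, so it can help to write them down as a small data structure. The following is a rough sketch, not an AdMapix schema: the `CapturedAd` class, its field names, and the 30-day approximation of "monthly" are assumptions chosen to mirror the table above.

```python
from dataclasses import dataclass, asdict
from datetime import date, timedelta


@dataclass(frozen=True)
class CapturedAd:
    """Source context for one saved ad example (step 3 of the workflow)."""
    url: str
    captured_on: date
    country: str
    platform: str
    advertiser: str
    ad_format: str


# Step 7: weekly for active markets; "monthly" is approximated as 30 days here.
REVIEW_INTERVAL = {"active": timedelta(weeks=1), "slow": timedelta(days=30)}


def missing_context(ad: CapturedAd) -> list[str]:
    """List the context fields that were left empty when the ad was saved."""
    return [name for name, value in asdict(ad).items() if not value]


def next_review(last_review: date, market_pace: str) -> date:
    """Return the next review date for an 'active' or 'slow' market."""
    return last_review + REVIEW_INTERVAL[market_pace]


ad = CapturedAd(
    url="https://example.com/ad/123",  # placeholder URL
    captured_on=date(2026, 1, 5),
    country="",  # forgotten at capture time
    platform="Meta",
    advertiser="BrandA",
    ad_format="video",
)
print(missing_context(ad))                    # ['country']
print(next_review(ad.captured_on, "active"))  # 2026-01-12
```

Flagging missing context at save time is cheaper than trying to reconstruct the country or capture date weeks later from a screenshot.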

Predictions for the Next 12 Months

These are practical expectations, not guarantees:

| Prediction | Why we expect it |
|---|---|
| Reports become more important | Teams need shareable research artifacts, not just ad search |
| Public transparency tools stay useful but limited | Platforms expose visibility data, not full economics |
| App and game ad research gets more specialized | Creative formats and funnels are too category-specific |
| SEO and product pages converge | Rankable reports can become both content and acquisition assets |
| Teams ask for freshness proof | Capture time and last-seen signals matter more |
| Copycat tactics get weaker | Helpful content and ad platforms both reward better context |
| AI-assisted research needs source grounding | Summaries without source links will not be trusted |

FAQ

What is ad intelligence in 2026?

Ad intelligence is the process of collecting, organizing, and interpreting competitor ad signals so a team can make better creative, channel, and positioning decisions. In 2026, the strongest workflows combine public ad libraries, auction context, saved reports, vertical filters, and original testing.

Are ad spy tools still useful?

Yes, but only when used as research systems. A tool that only shows screenshots is less useful than a workflow that preserves source, date, market, format, and repeated patterns.

What is the difference between ad intelligence and ad copying?

Ad intelligence identifies patterns and turns them into original hypotheses. Ad copying takes a visible ad and imitates it directly. Copying is risky, often inaccurate, and rarely defensible.

Which channels matter most for competitor research?

Meta and Google remain important because they offer large public and semi-private research surfaces. App and game marketers also need app store, playable, video, and in-game context.

How should a small team start?

Start with five competitors, one market, one channel, and one weekly review. Save examples in AdMapix reports, tag patterns, and only test ideas that connect to your own offer and data.

Conclusion

The main lesson from 30 days of AdMapix content is that ad intelligence in 2026 is a workflow problem.

Tools matter, but the winning system is bigger than a tool list. You need source context, saved reports, vertical filters, clear internal links, and a habit of turning competitor research insights into original tests.

If you want to build that system, start with the reports pages, then choose the monitoring volume that fits your team on the pricing page.
