Case Study: How We Found 10 Winning Facebook Ads in 2 Hours

A practical winning Facebook ads case study showing the filters, scoring system, and ad intelligence workflow we use to find strong ad candidates fast.

A good winning ads workflow uses filters to reduce noise, then scores creative signals before anyone copies an angle.

This winning Facebook ads case study shows a repeatable two-hour workflow for finding strong ad candidates without pretending that a public ad library can prove profit.

That distinction matters. Meta's public Ad Library can show currently running ads, pages, creative, platforms, and other public transparency data. Meta's Ad Library API documents fields such as page name, delivery dates, creative text, media type, platforms, and country filters. But public ad visibility does not show your competitor's CAC, ROAS, margin, attribution model, or creative testing budget.

So in this case study, "winning Facebook ads" means candidate ads with multiple external signals:

| Signal | Why it matters |
|---|---|
| Longevity | An ad that stays active may be clearing an internal performance bar |
| Variation | Multiple similar versions suggest active testing |
| Clear hook | The first frame or first sentence makes the user problem obvious |
| Offer clarity | Users know what they get and why now |
| Format fit | The creative matches the platform and placement |
| Landing-page match | The ad promise is not isolated from the post-click experience |
| Competitor repetition | More than one competitor uses the pattern |

Use this workflow with the Facebook Ads Library complete guide, the guide on how to spy on competitors' ads, and the product research workflow in how to find winning products on Facebook Ads Library.

The Goal

The goal was simple: find 10 Facebook ad candidates worth turning into creative briefs in under two hours.

We were not trying to build a perfect competitor database. We wanted enough evidence to answer four questions:

| Question | Decision |
|---|---|
| Which angles are appearing repeatedly? | Build a swipe file and brief variants |
| Which hooks are easy to understand? | Prioritize scripts and first-frame ideas |
| Which offers are competitors willing to keep live? | Test similar offer framing with our own economics |
| Which formats deserve production budget? | Choose video, carousel, image, UGC, or landing-page tests |

For this ad intelligence case study, we used a strict time box:

| Block | Time |
|---|---|
| Setup and category definition | 15 minutes |
| Competitor and keyword filtering | 35 minutes |
| Creative signal scoring | 45 minutes |
| Pattern grouping and brief notes | 25 minutes |

The time box forces discipline. Without it, competitor research turns into browsing.
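As a quick arithmetic check, the four blocks above can be laid out as a running schedule. This is only a sketch; the `schedule` helper and its names are ours for illustration, not part of any tool.

```python
# Time blocks from the table above, in minutes.
TIME_BOX = {
    "Setup and category definition": 15,
    "Competitor and keyword filtering": 35,
    "Creative signal scoring": 45,
    "Pattern grouping and brief notes": 25,
}


def schedule(blocks, start_minute=0):
    """Return (block, start, end) tuples with cumulative minute offsets."""
    plan = []
    cursor = start_minute
    for name, minutes in blocks.items():
        plan.append((name, cursor, cursor + minutes))
        cursor += minutes
    return plan


plan = schedule(TIME_BOX)
total_minutes = plan[-1][2]  # end of the last block

for name, start, end in plan:
    print(f"{start:>3}-{end:<3} min  {name}")
print(f"Total: {total_minutes} minutes")
```

The blocks add up to exactly 120 minutes, so any overrun in one block has to come out of another.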

Setup: Define the Search Universe

Before opening any tool, define the search universe.

| Setup field | Example choice |
|---|---|
| Category | Mobile app, ecommerce, SaaS, game, or local service |
| Market | One primary country first |
| Competitor set | 5-15 known brands or pages |
| Query terms | Problem, product, benefit, and competitor terms |
| Format focus | Video, image, carousel, playable, lead ad, or UGC |
| Lookback | Active now, last 30 days, or evergreen patterns |
| Exclusions | Brand-only ads, hiring ads, generic PR, irrelevant regions |

For the first run, keep the market narrow. Searching every country and every competitor at once creates a large list but weak decisions.
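One way to keep the setup honest is to write the search universe down as a small config object before searching. The sketch below is a minimal illustration: the `SearchUniverse` class, its field names, and the placeholder brands are all assumptions, and the only rules it checks are the ones stated in the setup table.

```python
from dataclasses import dataclass, field


@dataclass
class SearchUniverse:
    """Hypothetical container for the setup fields in the table above."""
    category: str
    market: str                      # one primary country for the first run
    competitor_pages: list[str]
    query_terms: list[str]
    format_focus: list[str] = field(default_factory=list)
    lookback: str = "active now"
    exclusions: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return human-readable issues; an empty list means ready to search."""
        issues = []
        if not 5 <= len(self.competitor_pages) <= 15:
            issues.append("competitor set should list 5-15 pages")
        if not self.query_terms:
            issues.append("add at least one query term")
        return issues


# Placeholder values, not real research data.
universe = SearchUniverse(
    category="ecommerce",
    market="US",
    competitor_pages=["Brand A", "Brand B", "Brand C"],
    query_terms=["posture corrector", "desk posture"],
    exclusions=["hiring ads", "generic PR"],
)
print(universe.problems())  # ['competitor set should list 5-15 pages']
```

Holding a single `market` string rather than a list is deliberate: it makes the "one primary country first" rule hard to skip.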

If you do not know where to start in Meta's tool, see the "where is Facebook Ads Library" guide. If you need to save examples for later review, follow the "download videos from Meta Ads Library" workflow, but respect platform terms and copyright.

The Filter Process

The fastest way to find winning ads is to filter for evidence before judging taste.

Use this order:

| Step | Filter | Reason |
|---|---|---|
| 1 | Country | Removes irrelevant offers and language |
| 2 | Active status | Shows what competitors are still running |
| 3 | Competitor pages | Anchors research in real players |
| 4 | Keywords | Adds category and problem coverage |
| 5 | Media type | Separates video, image, carousel, and text-heavy ads |
| 6 | Platform | Spots Facebook vs Instagram vs Messenger differences |
| 7 | Start date | Helps find newer tests vs evergreen ads |
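If your saved ads live in a spreadsheet export or a list of records, the filter order above can be sketched in a few lines. Everything here is an assumption for illustration: the record fields, the sample ads, and the choice to treat keywords as widening the competitor-page filter (our reading of "adds coverage") rather than narrowing it. It does not call Meta's tools or any API.

```python
from datetime import date

# Placeholder records; every field name here is an assumption.
ADS = [
    {"id": "a1", "country": "US", "active": True, "page": "Brand A",
     "text": "Fix desk posture in 10 minutes a day", "media_type": "video",
     "platforms": {"facebook", "instagram"}, "start_date": date(2024, 1, 5)},
    {"id": "a2", "country": "US", "active": False, "page": "Brand A",
     "text": "Old promo", "media_type": "image",
     "platforms": {"facebook"}, "start_date": date(2023, 11, 1)},
    {"id": "a3", "country": "DE", "active": True, "page": "Brand B",
     "text": "Posture trainer", "media_type": "video",
     "platforms": {"instagram"}, "start_date": date(2024, 2, 1)},
    {"id": "a4", "country": "US", "active": True, "page": "Unlisted Shop",
     "text": "A posture corrector that fits under a shirt", "media_type": "image",
     "platforms": {"instagram"}, "start_date": date(2024, 3, 1)},
    {"id": "a5", "country": "US", "active": True, "page": "Brand B",
     "text": "Meet the team", "media_type": "video",
     "platforms": {"facebook"}, "start_date": date(2024, 2, 10)},
]


def filter_ads(ads, country, pages, keywords, media_types, platform):
    """Apply the seven filters in table order and return the surviving ads."""
    kept = []
    for ad in ads:
        if ad["country"] != country:                 # 1. country
            continue
        if not ad["active"]:                         # 2. active status
            continue
        text = ad["text"].lower()
        on_page = ad["page"] in pages                # 3. competitor pages
        on_keyword = any(k.lower() in text for k in keywords)  # 4. keywords
        if not (on_page or on_keyword):
            continue
        if ad["media_type"] not in media_types:      # 5. media type
            continue
        if platform not in ad["platforms"]:          # 6. platform
            continue
        kept.append(ad)
    # 7. start date: oldest first, so long-running ads surface at the top
    return sorted(kept, key=lambda ad: ad["start_date"])


kept = filter_ads(
    ADS,
    country="US",
    pages={"Brand A", "Brand B"},
    keywords=["posture"],
    media_types={"video", "image"},
    platform="instagram",
)
print([ad["id"] for ad in kept])  # ['a1', 'a4']
```

Sorting by start date instead of cutting on it keeps both newer tests and evergreen ads in view, with the oldest active ads listed first.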

Then save candidates into a simple table:

| Field | Why capture it |
|---|---|
| Page | Shows advertiser identity |
| Ad URL or ID | Lets you revisit the exact creative |
| First-frame hook | Useful for scripting |
| Primary promise | What the ad claims |
| Offer | Discount, trial, quiz, demo, bundle, waitlist |
| Format | UGC, founder, animation, product demo, social proof |
| Longevity clue | Whether it appears to have remained active |
| Pattern group | Hook, pain point, offer, audience, objection |
| Score | Prioritizes what to brief |
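The candidate table above maps directly onto a flat file. As a minimal sketch using Python's standard `csv` module, the snippet below writes candidates with one column per field and refuses rows that skip a field. The column names and the sample row are placeholders we chose for illustration.

```python
import csv
import io

# One column per field in the table above (names are our own shorthand).
FIELDS = [
    "page", "ad_url_or_id", "first_frame_hook", "primary_promise", "offer",
    "format", "longevity_clue", "pattern_group", "score",
]


def candidates_to_csv(rows):
    """Serialize candidate dicts to CSV text, rejecting incomplete rows."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        missing = [name for name in FIELDS if name not in row]
        if missing:
            raise ValueError(f"candidate is missing fields: {missing}")
        writer.writerow(row)
    return buffer.getvalue()


# Placeholder candidate, not a real ad.
sample = {
    "page": "Brand A",
    "ad_url_or_id": "example-ad-id",
    "first_frame_hook": "Still slouching at your desk?",
    "primary_promise": "Better posture in 10 minutes a day",
    "offer": "trial",
    "format": "UGC",
    "longevity_clue": "appears active for several weeks",
    "pattern_group": "pain point",
    "score": 25,
}
csv_text = candidates_to_csv([sample])
print(csv_text.splitlines()[0])
```

Rejecting incomplete rows is a design choice: a candidate without a hook or a pattern group is hard to score or brief later.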

This is where an ad intelligence tool saves time. Public search is useful, but the work is faster when saved ads, filters, creative metadata, and competitor views are in one place. Use AdMapix reports when you want a cleaner research queue.

Our Scoring Framework

We scored each candidate from 1 to 5 across six dimensions.

| Dimension | What a 5 looks like |
|---|---|
| Hook clarity | A user understands the problem or payoff in one glance |
| Offer strength | The ad gives a concrete reason to act now |
| Format-market fit | The creative style matches how the audience buys |
| Differentiation | The idea is not identical to every competitor |
| Repeat evidence | Similar ads appear across versions, markets, or competitors |
| Briefability | The pattern can be turned into ethical, original creative |
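A rubric like this is easy to encode so every reviewer scores the same way. The sketch below assumes an unweighted sum across the six dimensions, giving a total between 6 and 30. That combination rule is our assumption for illustration; you may prefer weights that reflect your own priorities.

```python
# The six dimensions from the table above (identifiers are our shorthand).
DIMENSIONS = (
    "hook_clarity",
    "offer_strength",
    "format_market_fit",
    "differentiation",
    "repeat_evidence",
    "briefability",
)


def score_candidate(ratings):
    """Validate 1-5 ratings on all six dimensions and return their sum."""
    missing = [name for name in DIMENSIONS if name not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    for name in DIMENSIONS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    # Assumption: every dimension counts equally.
    return sum(ratings[name] for name in DIMENSIONS)


# Placeholder ratings for one candidate.
example_ratings = {
    "hook_clarity": 5,
    "offer_strength": 4,
    "format_market_fit": 4,
    "differentiation": 3,
    "repeat_evidence": 5,
    "briefability": 4,
}
total = score_candidate(example_ratings)
print(f"{total} / {5 * len(DIMENSIONS)}")  # 25 / 30
```

Raising an error on a missing or out-of-range rating keeps half-scored candidates out of the ranking.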

We rejected ads even if they looked polished when:

| Rejection reason | Why |
|---|---|
| The claim looked exaggerated | Hard to use without compliance risk |
| The format depended on a celebrity or trend | Difficult to replicate responsibly |
| The hook was clear but the product fit was weak | Likely to hurt downstream quality |
| The creative was brand-only | Not enough insight for acquisition |
| The ad had no pattern match | Interesting, but not a priority |

This prevents the biggest mistake in competitor research: copying the most visually loud ad instead of extracting the strategic pattern.

The 10 Winning Ad Patterns We Found

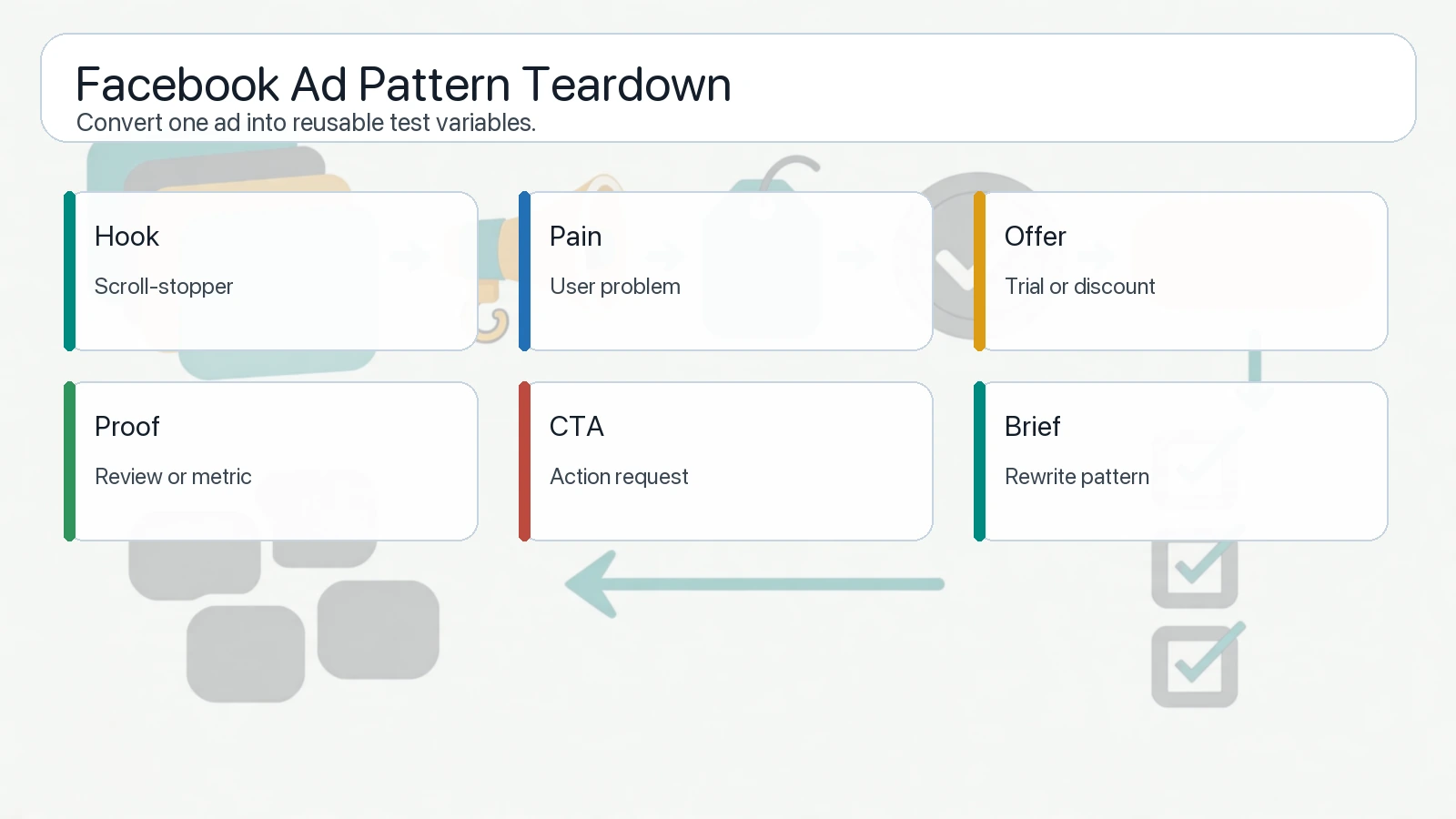

Winning candidates become useful when each ad is translated into a pattern: hook, offer, proof, format, and testable next step.

The final list was not "10 ads to copy." It was 10 patterns worth briefing.

| Pattern | Why it made the list | Test brief |
|---|---|---|
| 1. Problem-first opener | The pain point was visible before the brand | Build 3 first-frame variations around the same pain |
| 2. Before/after product proof | The outcome was easy to understand | Test a realistic transformation sequence |
| 3. Offer with constraint | The reason to act now was concrete | Test deadline vs bonus vs limited access |
| 4. UGC objection answer | The ad answered a user doubt naturally | Script 5 objection-led UGC hooks |
| 5. Side-by-side comparison | Competitor difference was obvious | Build a fair comparison without trademark risk |
| 6. Short demo loop | Product value appeared in under 5 seconds | Cut a product demo around one job |
| 7. Social proof stack | Credibility appeared before the CTA | Test review, usage count, press, or community proof |
| 8. Quiz or diagnosis hook | The ad turned attention into self-selection | Test a quiz landing page or lead magnet |
| 9. Creator-style narrative | The format felt native to the feed | Brief creator variants with stricter proof points |
| 10. Repeated visual metaphor | Multiple competitors used the same mental model | Adapt the metaphor to a different visual system |
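Grouping scored candidates into patterns like these is a small aggregation. The sketch below groups candidates by pattern, counts how many distinct competitor pages use each one, and ranks patterns by that count before mean score. The ranking rule, field names, and sample scores are assumptions for illustration, not results from this case study.

```python
from collections import defaultdict


def rank_patterns(candidates):
    """Summarize candidates per pattern, most widely repeated first."""
    groups = defaultdict(list)
    for candidate in candidates:
        groups[candidate["pattern"]].append(candidate)

    summary = []
    for pattern, ads in groups.items():
        pages = {ad["page"] for ad in ads}
        mean_score = sum(ad["score"] for ad in ads) / len(ads)
        summary.append({
            "pattern": pattern,
            "ads": len(ads),
            "competitors": len(pages),   # competitor repetition signal
            "mean_score": round(mean_score, 1),
        })
    # Assumption: repetition across competitors outranks a single high score.
    summary.sort(key=lambda s: (s["competitors"], s["mean_score"]), reverse=True)
    return summary


# Placeholder candidates with made-up scores.
CANDIDATES = [
    {"page": "Brand A", "pattern": "Problem-first opener", "score": 25},
    {"page": "Brand B", "pattern": "Problem-first opener", "score": 23},
    {"page": "Brand C", "pattern": "Problem-first opener", "score": 21},
    {"page": "Brand A", "pattern": "Short demo loop", "score": 27},
]
ranking = rank_patterns(CANDIDATES)
for row in ranking:
    print(row)
```

In this toy data the problem-first opener ranks first because three pages use it, even though the single demo-loop ad scored higher.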

The strongest candidates had three things in common:

| Common trait | Why it matters |
|---|---|
| They were easy to explain internally | If a strategist cannot summarize the ad, users may not understand it |
| They connected to a landing-page promise | The post-click path did not feel random |
| They suggested multiple variants | A single ad idea became a testing tree |

Takeaways From the Case Study

The two-hour workflow produced useful creative direction because it separated signal from taste.

| Lesson | Practical implication |
|---|---|
| Longevity is a clue, not proof | Use it to prioritize, not to claim profitability |
| Repetition matters | One ad is an example; repeated patterns are strategy inputs |
| Hooks travel better than visuals | Adapt the message before copying the style |
| Offers need economics | A competitor's discount may not fit your margin |
| Landing pages matter | Winning Facebook ads often have matching post-click journeys |
| Research should end in briefs | A swipe file without next steps has low value |

The most useful output was a backlog of tests:

| Test type | Example |
|---|---|
| Hook test | Pain-first vs outcome-first vs curiosity-first |
| Proof test | Review vs demo vs usage count |
| Format test | UGC vs product demo vs animated explainer |
| Offer test | Trial vs bundle vs urgency vs bonus |
| Audience test | Beginner vs advanced vs switcher |
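To see how quickly a backlog like this grows, compare changing one variable at a time against testing every combination. The sketch below takes the options from the table, treats the first option in each row as a baseline (an arbitrary choice on our part), and counts both approaches. It is an illustration of the arithmetic, not a recommendation for any particular test design.

```python
import math

# Options from the table above; the first item in each list is the baseline.
BACKLOG = {
    "hook": ["pain-first", "outcome-first", "curiosity-first"],
    "proof": ["review", "demo", "usage count"],
    "format": ["UGC", "product demo", "animated explainer"],
    "offer": ["trial", "bundle", "urgency", "bonus"],
    "audience": ["beginner", "advanced", "switcher"],
}


def one_at_a_time(backlog):
    """Build variants that differ from the baseline in exactly one dimension."""
    baseline = {dim: options[0] for dim, options in backlog.items()}
    cells = []
    for dim, options in backlog.items():
        for option in options[1:]:
            cell = dict(baseline)
            cell[dim] = option
            cells.append((f"{dim} test", cell))
    return baseline, cells


baseline, cells = one_at_a_time(BACKLOG)
full_factorial = math.prod(len(options) for options in BACKLOG.values())

print(f"{len(cells)} single-variable variants plus 1 baseline")
print(f"{full_factorial} combinations if every option is crossed")
```

With these options, single-variable testing needs 11 variants plus the baseline, while crossing everything produces 324 combinations.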

This is why "how to find winning ads" should not be treated as a screenshot exercise. The real work is turning observed patterns into original tests.

Repeatable Checklist

Use this checklist for your own two-hour run:

| Step | Note |
|---|---|
| Pick one market and one category | Keep the search narrow |
| List 5-15 competitor pages | Include direct and indirect competitors |
| Define 5-10 search terms | Use product, problem, outcome, and competitor language |
| Filter by active ads first | Start with currently running evidence |
| Save at least 30 candidates | Do not judge too early |
| Score candidates with a rubric | Avoid taste-only decisions |
| Group into patterns | Convert ads into reusable ideas |
| Pick 3-5 test briefs | Move from research to production |
| Add landing-page notes | Keep message match intact |
| Review after launch | Compare competitor signal with your own results |

If you run this workflow repeatedly, check the pricing page to choose a plan that fits your research volume.

FAQ

What is a winning Facebook ad?

A winning Facebook ad is an ad that appears to have strong performance signals and can be turned into a useful test. Public tools cannot prove profit, so use longevity, variation, clarity, offer, and repetition as research signals.

How do you find winning ads?

Start with a narrow market, search competitor pages and category keywords, filter active ads, save candidates, score creative signals, group patterns, and turn the best patterns into original briefs.

Can Facebook Ads Library prove an ad is profitable?

No. Facebook Ads Library can show public ad transparency data, but it does not show CAC, ROAS, margin, attribution, or internal budgets. Treat it as a discovery source, not a profitability report.

What signals matter most?

The strongest signals are clarity, offer relevance, repeated variants, longevity, post-click match, and whether the idea can be adapted ethically to your own product.

How often should I repeat this workflow?

For active paid acquisition teams, repeat it weekly for fast-moving categories and monthly for stable categories. Run it again before major seasonal campaigns.

Conclusion

This winning Facebook ads case study found 10 useful ad patterns in two hours by using filters first, scoring second, and creative briefs third. The point was not to copy competitors. The point was to compress research time and produce better tests.

Use AdMapix reports to repeat the workflow in your niche, compare competitor creative patterns, and turn ad intelligence into a testing queue your team can actually ship.

